DeepSeek-R1: pure reinforcement learning (RL), no supervised fine-tuning (SFT), no supervised chain-of-thought (CoT) data

#1minPapers

In addition to being open source, DeepSeek-R1 is significant because its R1-Zero variant is trained with reinforcement learning (RL) alone, with no supervised fine-tuning (SFT) as a “cold start”. This is reminiscent of AlphaZero, which mastered Go, Shogi, and Chess from scratch through self-play, without learning from human games.

The secret sauce is the rewards: they are computed against ground truth by hardcoded rules (answer accuracy and output format) rather than by a learned reward model. Learned rewards are easily hacked during RL.
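A rule-based reward can be sketched in a few lines. This is only an illustration, not DeepSeek's code: the function names, the 0/1 reward values, the equal weighting of the two terms, and the exact-string answer check are all assumptions. The `<think>` and `<answer>` tags follow the prompt template described in the paper.

```python
import re

# Assumed layout: reasoning inside <think>...</think>, then the final
# answer inside <answer>...</answer>, with nothing else around them.
FORMAT_PATTERN = re.compile(
    r"^<think>.+?</think>\s*<answer>(.+?)</answer>\s*$", re.DOTALL
)

def format_reward(completion: str) -> float:
    """1.0 if the completion follows the expected tag layout, else 0.0."""
    return 1.0 if FORMAT_PATTERN.match(completion.strip()) else 0.0

def accuracy_reward(completion: str, ground_truth: str) -> float:
    """1.0 if the extracted answer equals the ground truth exactly, else 0.0."""
    found = re.search(r"<answer>(.*?)</answer>", completion, re.DOTALL)
    if found is None:
        return 0.0
    return 1.0 if found.group(1).strip() == ground_truth.strip() else 0.0

def rule_based_reward(completion: str, ground_truth: str) -> float:
    """Sum of both terms (equal weighting is an assumption of this sketch)."""
    return accuracy_reward(completion, ground_truth) + format_reward(completion)
```

Because both terms are deterministic checks, the policy has no learned reward model whose blind spots it could exploit; it can only score by actually producing the right answer in the right format.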

It uses Group Relative Policy Optimization (GRPO) instead of Proximal Policy Optimization (PPO). GRPO forgoes the critic model, which is typically the same size as the policy model, and instead estimates the baseline from group scores: the average reward of multiple samples drawn for the same prompt. Dropping the critic reduces memory use.
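The group baseline can be illustrated with a small sketch. It covers only the advantage estimate, where each sample's reward is compared with the group mean and scaled by the group standard deviation; the clipped policy objective and KL penalty are omitted. The function name and the `eps` stabilizer are assumptions made for this example.

```python
from statistics import mean, pstdev

def group_relative_advantages(rewards: list[float], eps: float = 1e-8) -> list[float]:
    """Advantage of each sample relative to its own group.

    `rewards` holds the scalar rewards of several completions sampled
    for the same prompt. The group mean plays the role that the critic's
    value estimate plays in PPO, so no separate critic model is needed.
    `eps` (an assumption of this sketch) avoids division by zero when
    every sample in the group gets the same reward.
    """
    baseline = mean(rewards)
    spread = pstdev(rewards)
    return [(r - baseline) / (spread + eps) for r in rewards]

# Four completions for one prompt: two correct, two incorrect.
advantages = group_relative_advantages([1.0, 0.0, 0.0, 1.0])
```

Samples that beat the group average get a positive advantage and are reinforced; samples below it are pushed down. When all samples score the same, every advantage is zero and the group contributes no gradient signal.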

Emergent properties:

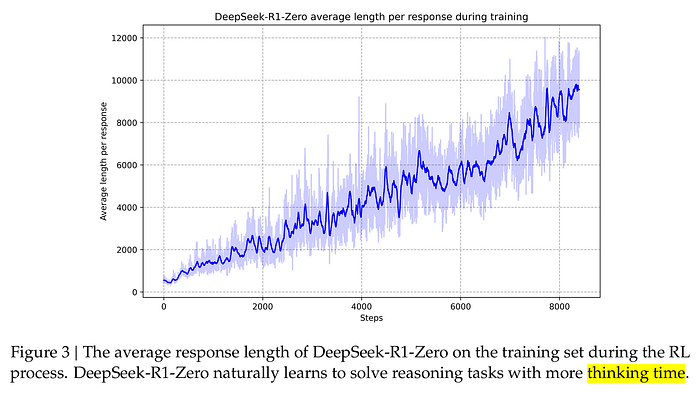

- Thinking time (response length) steadily increased throughout the training process

- Self-reflection and exploration behaviors

Code and paper on GitHub: https://github.com/deepseek-ai/DeepSeek-R1